Josh Urban Davis is an American designer and engineer from Texas. His research incorporates machine learning and computer vision algorithms into multimodal systems to solve a wide range of problems in the fields of human-computer interaction, graphics, and ubiquitous computing. Recent projects have focused on generative design, novel creativity and communication support tools, and inclusive technologies. His research publications have won several best paper (C&C, ASSETS) and honorable mention (CHI) awards, as well as awards from the Neukom Institute for outstanding research.

Davis' creative projects explore the relationship between emerging technologies, social relationships, and identity. Recently, his work received a grant from the Queer Arts Council and was featured in the Queer Futures Journal by 3oC. Selected work has been exhibited at Grey Area Foundation for the Arts (San Francisco, California), DiverseWorks (Houston, Texas), Synaps Projects (Brooklyn, NY), the Blaffer Art Museum (Houston, Texas), and Chandler Center for the Arts (Randolph, Vermont), and was featured in collaboration with the New School as part of the Venice Architecture Biennale in 2021.

He currently lives in San Francisco, CA with his two cats, Jynx and Nyx.

Josh Urban Davis, Hongwei Wang, Parmit K. Chilana, Xing-Dong Yang

In Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies (IMWUT/UbiComp'23), Volume 7, Issue 3, Article No. 92, pp. 1–31

[23.2% Acceptance Rate]

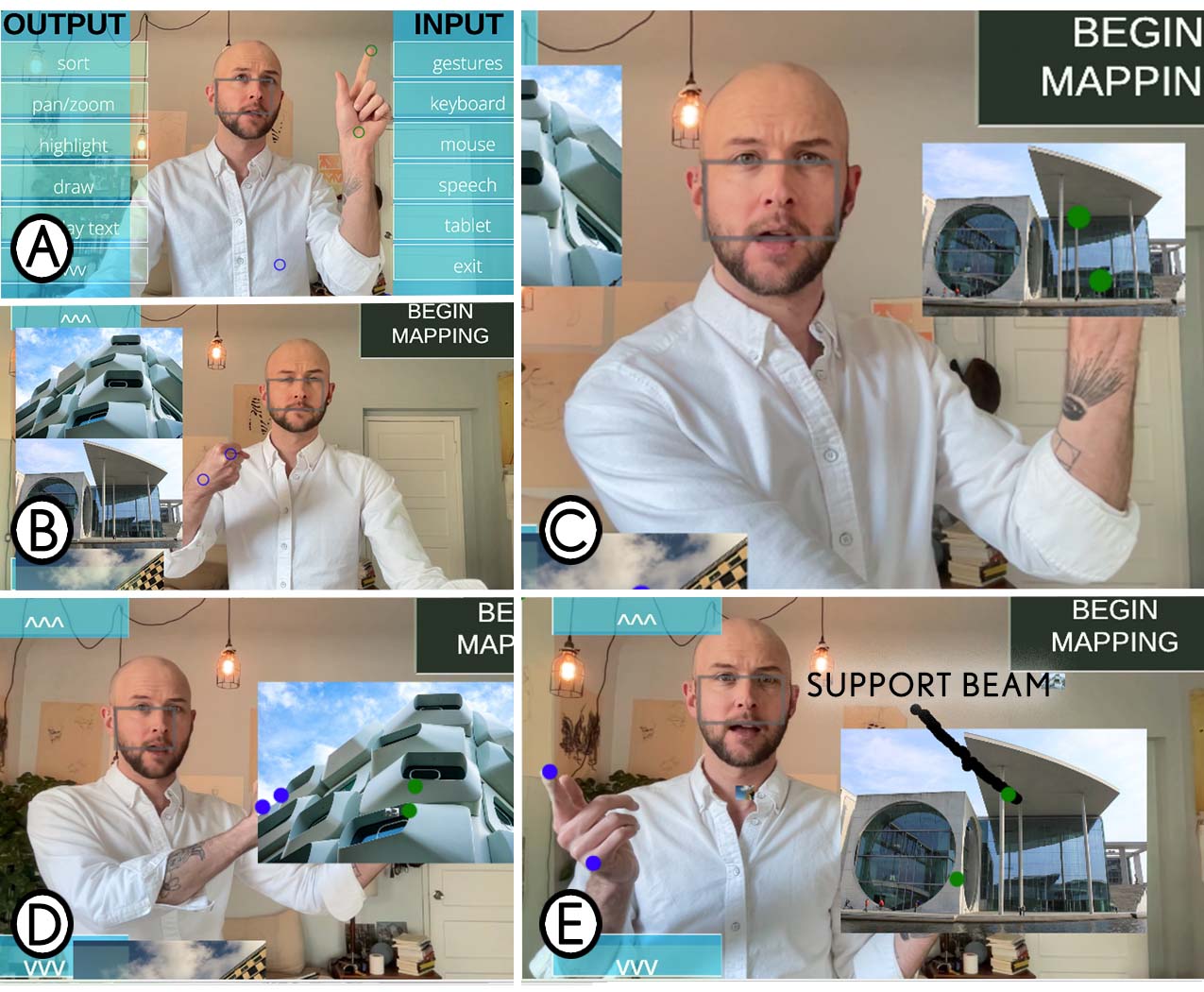

Josh Urban Davis, Paul Asente, Xing-Dong Yang

In Proceedings of ACM Conference on Designing Interactive Systems (DIS ’23), July 10–14, 2023, Pittsburgh, PA, USA

[24.6% Acceptance Rate]

Makayla Lewis, Miriam Sturdee, John Miers, Thuong Hoang, Josh Urban Davis

In Proceedings of Conference on Human Factors in Computing Systems (CHI’22), April 29–May 05, 2022, New Orleans, LA, USA. ACM, New York, NY, USA, 13 pages. https://doi.org/10.1145/3491101.3516394

[21% Acceptance Rate]

Exploring Augmenting Masks through an Interactive, Mixed-Reality Prototype

Josh Urban Davis, John Tang, Edward Cutrell, Teddy Seyed

In Proceedings of the 55th Hawaii International Conference on System Sciences (HICSS'22) https://doi.org/10.24251/HICSS.2022.398

Josh Urban Davis, Fraser Anderson, Merten Stroetzel, Tovi Grossman, George Fitzmaurice.

In Proceedings of ACM Symposium on Creativity and Cognition (C&C ’21). June 22 - 23, 2021, Virtual Event, Italy. ACM, New York, NY, USA, 11 pages. https://doi.org/10.1145/3450741.3465260

[23.1% Acceptance Rate]

Artistic Narratives in HCI Research

Josh Urban Davis, Miriam Sturdee, Makayla Lewis, Angelika Strohmayer, Katta Spiel, Nantia Koulidou, Sarah Fdili Alaoui

In Proceedings of ACM Symposium on Creativity and Cognition (C&C'21), 2021

[31% Acceptance Rate]

Best Paper: Top 1%

On the Utility of Ugly Interfaces.

Josh Urban Davis & Johann Wentzel.

In Proceedings of ACM Conference on Human Factors in Computing Systems (CHI'21), 2021

[21% Acceptance Rate]

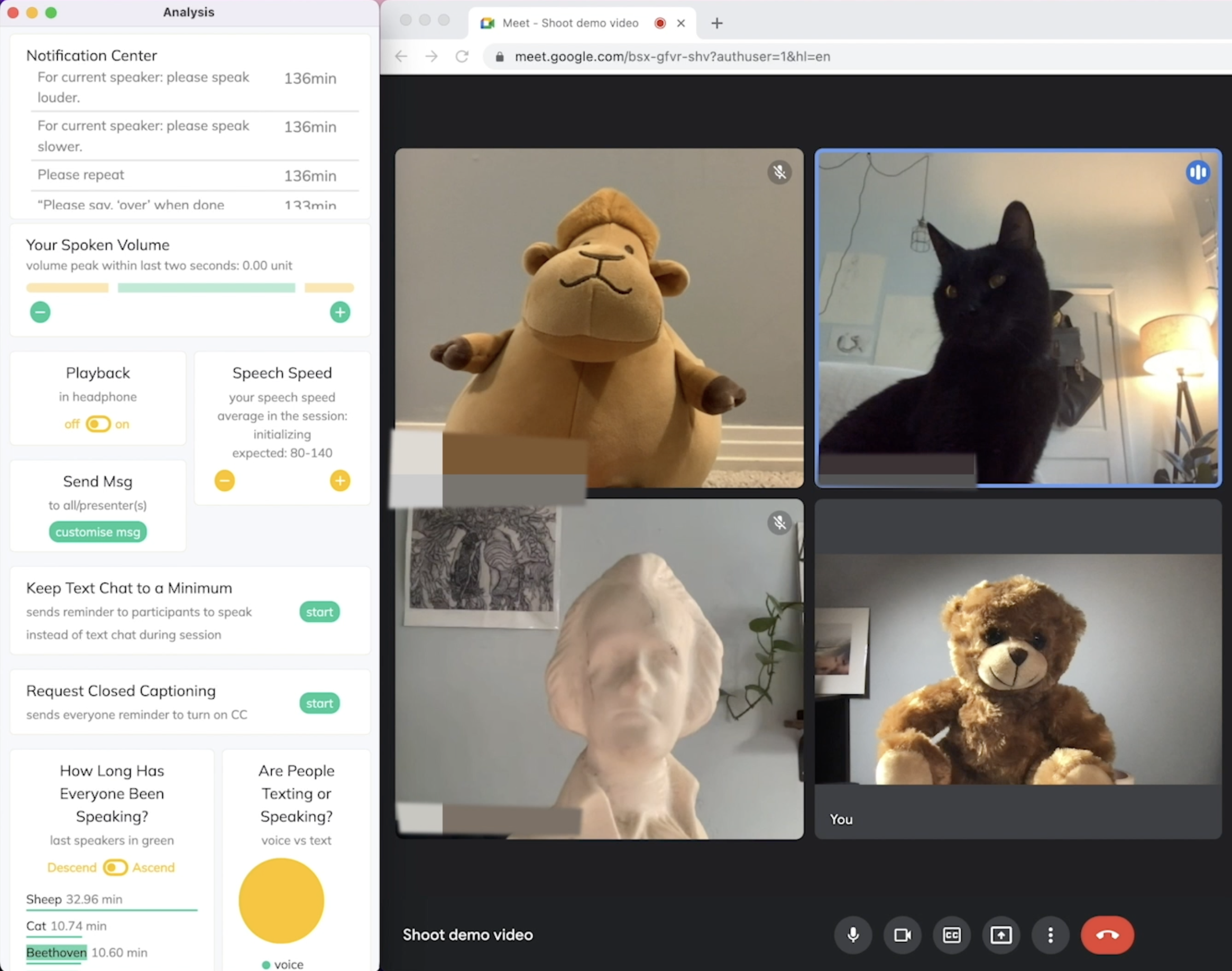

Kelly Mack, Maitraye Das, Dhruv Jain, Danielle Bragg, John Tang, Andrew Begel, Erin Beneteau, Josh Urban Davis, Abraham Glasser, Joon Sung Park, Venkatesh Potluri

In Proceedings of ACM Symposium on Accessible Computing (ASSETS ’21), October 18–22, 2021, Virtual Event, USA

[23.2% Acceptance Rate]

Best Paper: Top 5%

An Interactive 3D Printed Circuit Education Tool for People with Visual Impairments.

Josh Urban Davis, Te-Yen Wu, Bo Shi, Hanyi Lui, Athina Panotopoulou, Emily Whiting, & Xing-Dong Yang.

In Proceedings of ACM Conference on Human Factors in Computing Systems (CHI'20), 2020.

[23% Acceptance Rate]

Honorable Mention: Top 5%

A System for Peripherally Reinforcing Best Practices in Hardware Computing

Josh Urban Davis, Jun Gong, Yunxin Sun, Parmit Chilana, & Xing-Dong Yang

Proceedings of ACM Symposium on User Interface Software and Technology (UIST'19), 2019.

[20.6% Acceptance Rate]

A Fiber-Optic eTextile for MultiMedia Interactions

Josh Urban Davis

Proceedings of the International Conference on New Interfaces for Musical Expression (NIME'19), 2019.

[31% Acceptance Rate]

Contact-Based, Object-Driven Interactions with Inductive Sensing.

Jun Gong, Xin Yang, Teddy Seyed, Josh Urban Davis, & Xing-Dong Yang.

Proceedings of ACM Symposium on User Interface Software and Technology (UIST'18), 2018.

[21% Acceptance Rate]

past and ongoing works

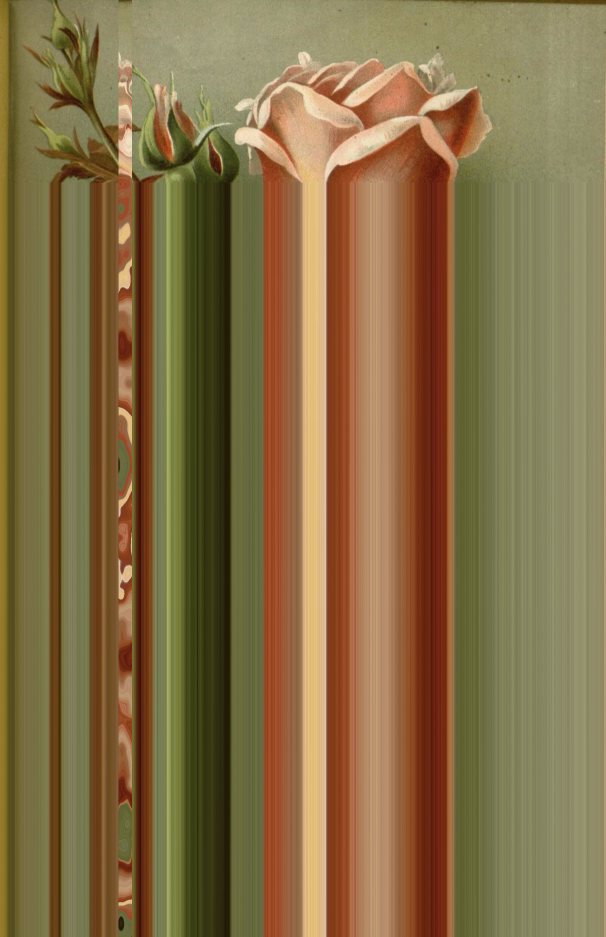

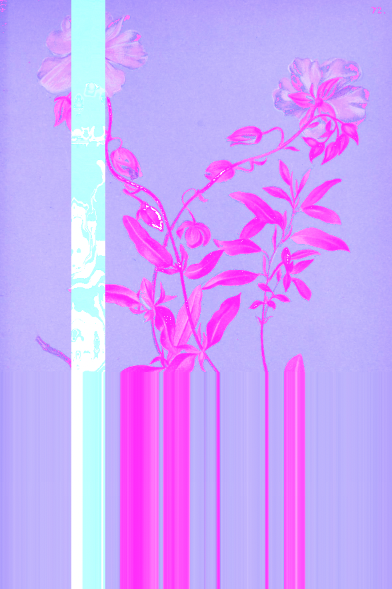

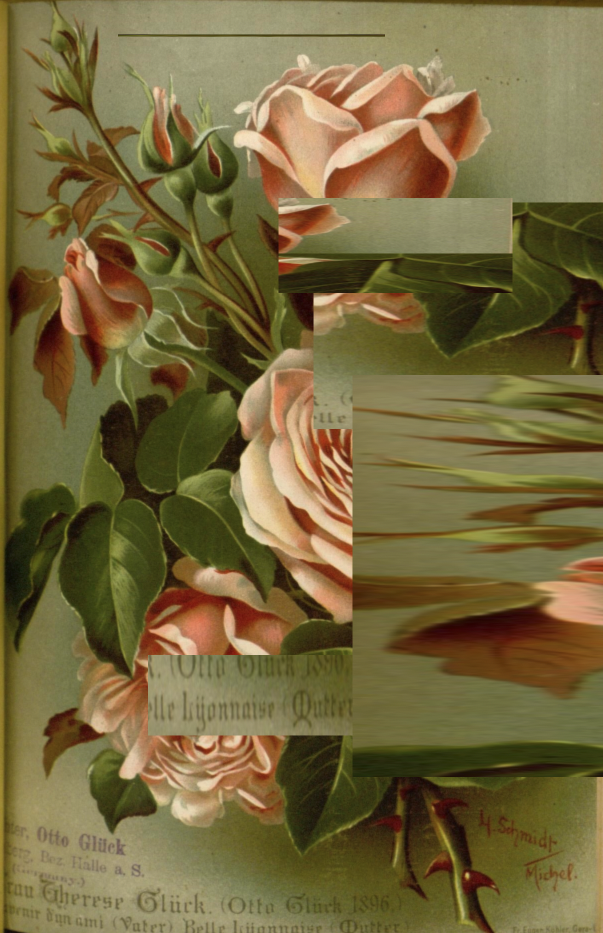

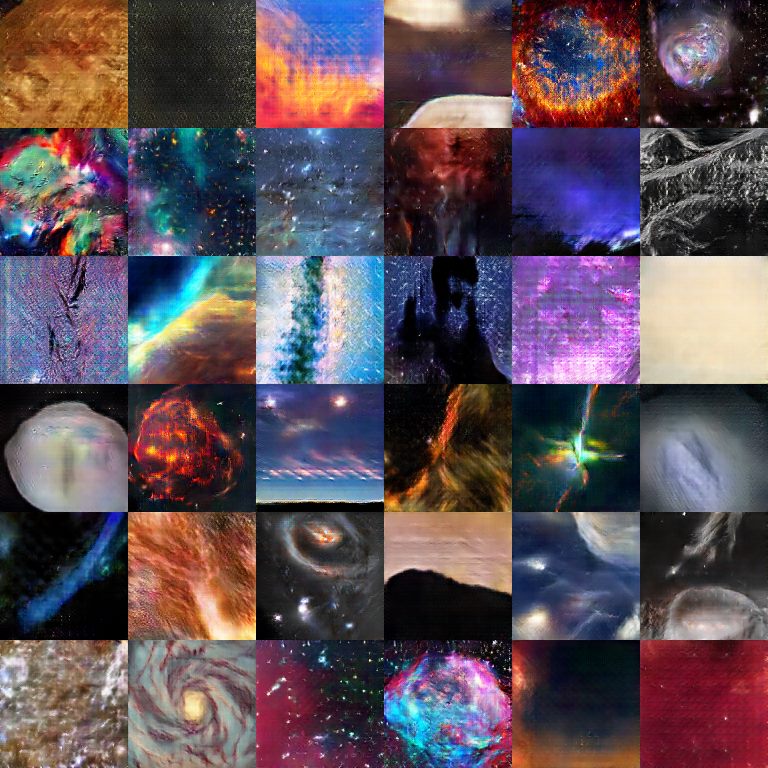

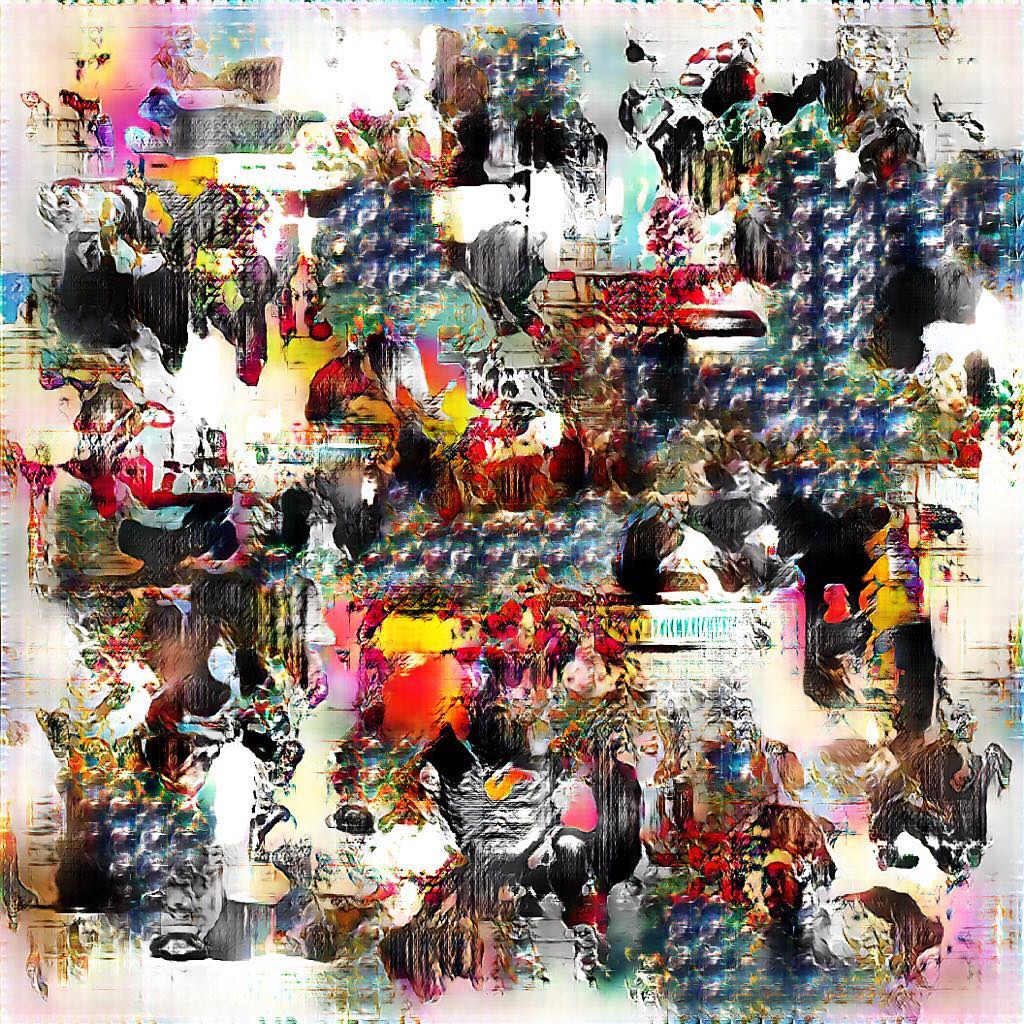

The following is a selection of images and videos created between February 2021 and March 2022 as part of an ongoing series of experiments in photomanipulation and shader rendering using JavaScript.

Source code for these experiments can be found on GitHub, and more images and videos can be seen on Instagram.

if you go_say hello was conceived as a memorial project in collaboration with The New School Center for Policy in Space for the 2021 Venice Architecture Biennale.

The project comprises an AI trained on the collected writing of a recently deceased poet, which was attached to a commercial satellite and sent into space. Twice a day, visitors to a web portal were able to receive transmissions from the satellite of writings produced by the AI circling the planet. While the original AI model was able to generate convincing poems in the style of the deceased poet, over time the small computer attached to the rocket housing the AI slowly corrupted due to the electromagnetic radiation of space. The twice-daily transmissions of writing slowly shifted from legible text to strange sentences, to random characters, and eventually to the total cessation of communication as the computer housing the AI slowly degraded from radiation. The collected writings, including the corrupted transmissions, were bound and distributed as a memento mori at the funeral of the poet. if you go_say hello is a meditation on loss, the physics of death, and the transformation of data across space and time.

The name of the deceased and the subsequent writing have remained private out of respect for the family's wishes. This project was sponsored by The New School Center for Space Policy for the Venice Architecture Biennale 2021.
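The gradual decay described above, from legible text to random characters, can be approximated in software. The following is a minimal Python sketch, not the project's actual mechanism (which the text attributes to physical radiation damage to the onboard computer): it flips bits in a UTF-8 string at random, with an error rate that grows at each transmission. The function names, the error-rate schedule, and the sample line are all hypothetical.

```python
import random


def flip_bits(data: bytes, p: float, rng: random.Random) -> bytes:
    """Flip each bit of `data` independently with probability `p`."""
    out = bytearray(data)
    for i in range(len(out)):
        for bit in range(8):
            if rng.random() < p:
                out[i] ^= 1 << bit
    return bytes(out)


def degrade(text: str, transmissions: int, step: float = 0.01, seed: int = 0):
    """Yield one progressively more corrupted copy of `text` per transmission.

    The bit-error rate rises linearly by `step` each time (an assumed
    schedule), capped at 0.5, where the output is effectively noise.
    """
    rng = random.Random(seed)
    data = text.encode("utf-8")
    for n in range(transmissions):
        p = min(0.5, n * step)
        data = flip_bits(data, p, rng)  # damage accumulates over time
        yield data.decode("utf-8", errors="replace")


if __name__ == "__main__":
    # Hypothetical sample line; the poet's writing is not public.
    for line in degrade("hello from orbit", transmissions=6, step=0.02):
        print(line)
```

Because the damage accumulates and the error rate keeps rising, early outputs stay readable while later ones drift toward noise, which loosely mirrors the arc the project describes.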

When a piece of architecture is emptied of people, it lurches towards being ruins. These works were made during the COVID-19 lockdown, when our own buildings and cities had fallen quiet. Often I use machines and algorithms as an innate part of my practice [7], and during quarantine it occurred to me how vital these technologies were to communication. Our entire lives are mediated by zeros and ones, “likes” and comment threads and endless feeds. I wanted to use these same means of communication, these tools of connection, to talk about our division. If nothing else, they made me feel less lonely and gave me a place to escape to.

The plague highlighted this dependency on our tools, since almost all social interaction, communication, and labor became mediated by our machines. Our tools and technologies became not only our means of surviving during the plague, but essential means of escaping the isolation it imposed. In this way, our machines became our principal companions in the physical world, mediating our connection to others through the virtual world. The Hideouts series serves as an aesthetic representation and active practice of exploring this tension between the physical world of viruses and the virtual world of machines, a place to hide while waiting for the world to finish ending.

The Night Air is a collaborative poem composed by visitors to the thenightair.com website during the COVID-19 lockdown of 2020.

Miasma, a now-obsolete medical term originating in the Middle Ages, was once thought to be the root cause of earlier plagues and the spread of disease. Also known as "the night air", the idea of miasma held that bad air or pollution spread illness. While many previous plagues were spread by animals, water, or infected food, COVID-19, ironically, is a disease spread through the air. Unlike many previous plagues, we know how to slow and alter its spread. The spread of information, support, and compassion is one way we can heal, and find and redefine the future for ourselves. The Night Air is a place to record our personal experiences during this pandemic through a single collective poem, which over time will reveal our shifting moods and varying perspectives across the world. Unlike in the pandemics that preceded this one, technology has served not only as a way to receive information but also as a way to connect and share information informally and personally with anyone, anywhere. We invite you to reflect on the last few months: not only the struggles but the sweet triumphs, the new connections, the things you've discovered about yourself and others, your idea of happiness, the right way forward, what you are leaving behind to make space for something new and more fulfilling, and how you are coping and preparing for more.

This project was sponsored by a grant from the Houston Arts Alliance.

The following images and animations are generated by artificial intelligence agents called Generative Adversarial Networks (GANs) trained on a variety of images, including colorized photographs of the universe and paintings from art history. The aesthetics of glitch art is the aesthetics of mistakes. This metaphor functions as a healing practice in that it allows us to see fallibility as valuable. Algorithms carry a utilitarian imperviousness; their failures are a reflection of their creators' shortcomings.

Glitch aesthetics offer an opportunity to see this failure as a bizarre healing practice. In Postcards from the Electric Void, artist and engineer Josh Urban Davis trained a neural network on a dataset of colorized space images, and image archives from art history museums. By asking a machine to imagine the composition of our universe, we facilitate a poetic dialogue between the unfathomably large void of space and the infinitesimal void of data. When the machine produces something plausible as an image of our universe, the experience is uncanny.

However, when the machine produces glitches and errors, these aesthetics reflect a recognizable shortcoming: the machine's inability to reconcile the unfathomable. In this way, the void-glancing machine is recognizable as fallible, creating a point of empathic connection between human and machine.

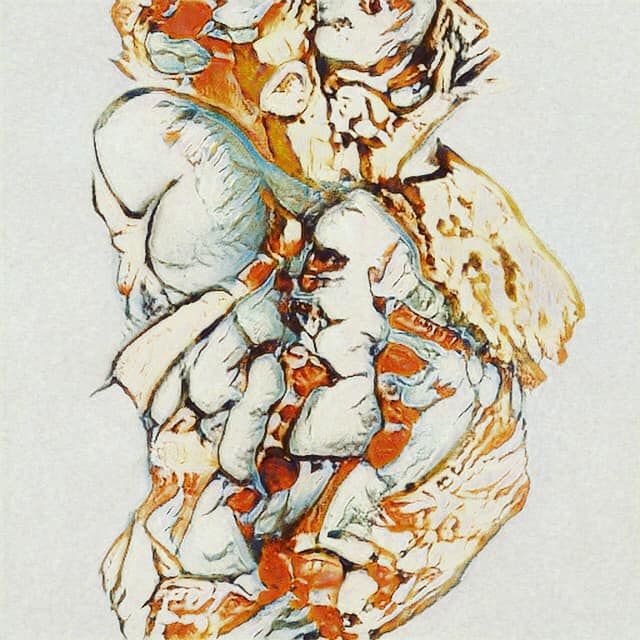

Synapstraction is a brain-computer interaction project which allows users to create an abstract painting based on neuro-feedback. The system uses a special headset that measures electrical activity at a person’s scalp, which machine learning algorithms then process to discriminate a stimulus’s effect on the brain.

Each visitor is invited to enter the installation and approach one of five “sense” stations, each with an electroencephalography (EEG) headset. We then use the EEG to measure the event-related potential (ERP) elicited in the visitor’s brain when the visitor receives one of five stimuli. These five stimuli correspond to the five senses (sight, touch, taste, sound, smell). In addition, each stimulus is mapped to a specific creative instrument such as a paintbrush, sponge, or marker.

Once the visitor has secured their headset, they are presented with a stimulus (e.g., a Chopin nocturne if at the “sound” station or freshly ground clove for the “scent” station). Our system then uses a machine learning method called linear discriminant analysis to map the activity of the visitor’s brain while they experience the stimulus to acoustic frequencies which actuate the painting implements. After visiting each of the five “sense” stations within the installation, the participant is invited to keep their finished painting.
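The mapping from brain activity to acoustic frequency described above can be illustrated with a small sketch. The Python below is not the installation's code: it assumes each EEG epoch has already been reduced to two hypothetical features, fits a two-class Fisher linear discriminant (baseline versus stimulus) in closed form, and squashes the resulting score into an assumed 110–880 Hz range. All function names, feature values, and the frequency range are illustrative assumptions.

```python
import math
from statistics import mean


def fit_lda_2d(class_a, class_b):
    """Fit a two-class Fisher linear discriminant on 2-feature samples.

    Returns (w, threshold): the projection weights and the midpoint
    between the two projected class means.
    """
    def centroid(samples):
        return [mean(s[0] for s in samples), mean(s[1] for s in samples)]

    def scatter(samples, mu):
        # Entries (sxx, sxy, syy) of the within-class scatter matrix.
        sxx = sum((s[0] - mu[0]) ** 2 for s in samples)
        sxy = sum((s[0] - mu[0]) * (s[1] - mu[1]) for s in samples)
        syy = sum((s[1] - mu[1]) ** 2 for s in samples)
        return sxx, sxy, syy

    mu_a, mu_b = centroid(class_a), centroid(class_b)
    sa, sb = scatter(class_a, mu_a), scatter(class_b, mu_b)
    sxx, sxy, syy = sa[0] + sb[0], sa[1] + sb[1], sa[2] + sb[2]
    det = sxx * syy - sxy * sxy
    if det == 0:
        raise ValueError("within-class scatter matrix is singular")
    dx, dy = mu_b[0] - mu_a[0], mu_b[1] - mu_a[1]
    # w = Sw^{-1} (mu_b - mu_a), using the closed-form 2x2 inverse.
    w = [(syy * dx - sxy * dy) / det, (sxx * dy - sxy * dx) / det]
    threshold = (project(w, mu_a) + project(w, mu_b)) / 2
    return w, threshold


def project(w, sample):
    """Project a 2-feature sample onto the discriminant axis."""
    return w[0] * sample[0] + w[1] * sample[1]


def score_to_frequency(score, threshold, low_hz=110.0, high_hz=880.0, gain=1.0):
    """Map a discriminant score to a frequency in [low_hz, high_hz].

    Uses a logistic squashing (written with tanh to avoid overflow);
    the range and gain are assumed values.
    """
    z = 0.5 * (1.0 + math.tanh(0.5 * gain * (score - threshold)))
    return low_hz + z * (high_hz - low_hz)


if __name__ == "__main__":
    # Hypothetical per-epoch features, e.g. ERP amplitude and latency.
    baseline = [(1.0, 2.0), (1.5, 1.8), (1.2, 2.2)]
    stimulus = [(3.0, 4.0), (3.5, 4.2), (3.2, 3.8)]
    w, threshold = fit_lda_2d(baseline, stimulus)
    new_epoch = (2.8, 3.6)
    print(score_to_frequency(project(w, new_epoch), threshold))
```

In this sketch, epochs that look more like the stimulus class produce higher frequencies; a real system would use many more features and a tested library implementation.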

The participant’s brain serves as a conduit, translating the stimulation of each sense into the finished image. In this way, the usual methodology of an artist using their senses to create a media object is inverted; the senses use the artist to create an image. Synapstraction largely takes its aesthetic interests from the abstract expressionists of the 20th century and its conceptual framework from aleatory artists such as John Cage. Unlike Cage, however, Synapstraction maps all senses to image, and renders the consumption of sensual stimuli as an act of image creation. The material of this artwork doesn’t necessarily lie in the paintings themselves, nor the equipment used in the installation, but instead rests in the speculative reconsideration of potential alternative roles for human senses in art making.

This project premiered during the Digital Arts Festival at the Black Visual Arts Center in 2017.

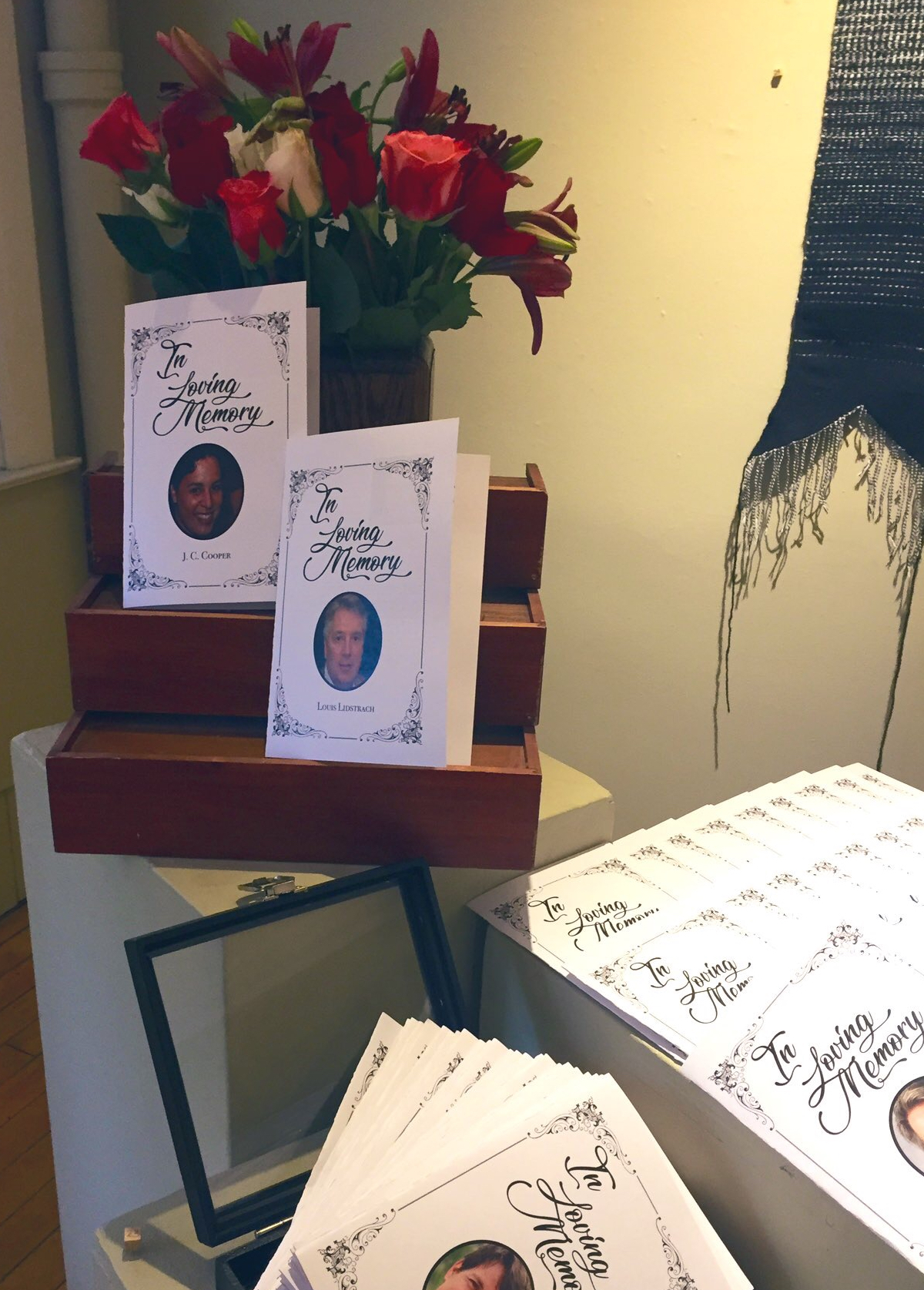

thisObituaryDoesNotExist comprises a physical and digital collection of obituaries generated by two artificial neural networks. The algorithms (StyleGAN and GPT-2) are shown images and text taken from publicly available online obituaries, and asked to imagine new obituaries. In this way, the system generates infinitely many photographs, death narratives, and life stories for persons who never existed.

The physical exhibition consists of a collection of funeral pamphlets containing a selection of the generated obituary text and images, each pamphlet dedicated to the life and death of one generated person. By making these printed materials indistinguishable from funeral pamphlets for physical persons, Davis invites us to consider how these objects differ from and are similar to each other. In this way, the line between generated fiction and physical reality becomes blurred, and we are invited to question what constitutes an authentic personal identity in an era of massive data, social media, and creative artificial intelligences.

thisObituaryDoesNotExist interrogates how technology affects, distributes, and manipulates human experiences of death and grief. The digital website generates a new obituary each time the page is refreshed.

This project premiered at Chandler Center for the Arts in 2019.

PsycheVR is a virtual reality and biofeedback project conducted in collaboration with the Space Medicine Laboratory at the Geisel School of Medicine and the National Aeronautics and Space Administration (NASA).

The project consists of developing virtual reality content to promote relaxation when the user is confined to small, isolated spaces for long durations of time. The products of this project were used in trial experimentation with NASA astronauts intended for future missions to Mars.

We also prototyped biofeedback mechanisms that take in a user’s biometric state, as determined by GSR, EEG, facial expression, and other stress indicators, and subsequently adjust the content of the VR environment to assist in calming the user.
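As a rough illustration of such a biofeedback loop, the Python sketch below combines a few normalized stress indicators into a single score and eases a VR "calming intensity" parameter toward it. This is an assumption-laden sketch, not the PsycheVR implementation: the `Biometrics` fields, the weights, and the smoothing rate are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Biometrics:
    """Hypothetical stress indicators, each pre-normalized to 0..1."""
    gsr: float             # galvanic skin response
    eeg_beta: float        # relative beta-band EEG power
    facial_tension: float  # facial-expression stress estimate


def stress_score(b: Biometrics, weights=(0.4, 0.4, 0.2)) -> float:
    """Weighted sum of the indicators, clamped to 0..1 (assumed weights)."""
    raw = (weights[0] * b.gsr
           + weights[1] * b.eeg_beta
           + weights[2] * b.facial_tension)
    return min(1.0, max(0.0, raw))


def update_calming(current: float, stress: float, rate: float = 0.2) -> float:
    """Ease the calming intensity toward the measured stress level.

    Exponential smoothing avoids abrupt changes in the environment.
    """
    return current + rate * (stress - current)


if __name__ == "__main__":
    calming = 0.0
    reading = Biometrics(gsr=0.8, eeg_beta=0.6, facial_tension=0.5)
    for _ in range(5):
        calming = update_calming(calming, stress_score(reading))
        print(round(calming, 3))
```

A VR scene could read the calming value each frame and, for example, slow motion or soften lighting as it rises; which scene parameters respond is a design choice outside this sketch.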

"Three Days and a Year On Top of a Mountain" (featured right) is a VR sunrise-to-sunset timelapse created on top of Gile Mountain. Recorded over the course of a year, the video features scenes from Gile Mountain throughout its four seasons. The final video combines this footage into a single timelapse, allowing the viewer to experience a day and a year on top of the mountain simultaneously.

A portfolio sampling of various graphic design and digital collage projects both past and ongoing.

This compilation showcases a series of ongoing animation experiments initiated in 2017. The films blend various mediums, including visual documentation of abandoned sculptures, time-lapse sequences from laboratory experiments, and visual programming explorations, among others.

Each animation is integrated with writings from contemporary authors, aiming to visually and audibly enhance the aesthetic narrative of the accompanying literature. Credits for individual authors are provided within each film. The soundscapes are crafted using SuperCollider, adding an otherworldly auditory accompaniment to the visuals.

{i keep my frankenstein in a jar} (2020)

A perpetual code and sound animation

Commissioned for Tele Magazine

The Wild Iris (2017)

Words by Louise Glück

Inspired by Burn by Darcy Rosenburger

Let's get in touch. Send me a message:

Oakland, CA

Email: hello (at) joshurbandavis.com